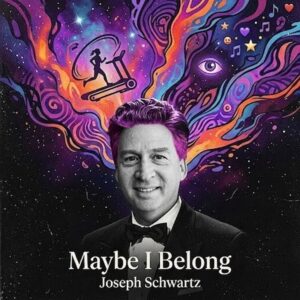

Joseph Schwartz

Blending AI innovation with human instinct, Joseph Schwartz explores identity, creativity, and belonging through a “Hybrid-Human” approach, crafting emotionally driven, genre-defying music rooted in bold experimentation.

1. Your “Hybrid-Human” workflow is central to your identity as an artist. How did this approach first take shape, and what drew you to combine AI generation with manual production?

I started creating music as an exploration of how much AI could elevate the skills of a non-musician to make “viable” music. Each song became an experiment in process, expression, sound and tools. My first songs followed a process that I thought of as “direct-and-curate”, built from a listener’s perspective and constantly checking whether the output was interesting to me. That included cropping the strongest parts of generations and then extending or regenerating around that core. Ultimately, I realized that a DAW (I use Audacity) was helpful for a few things:

Refining a sound that I wanted, e.g. the voice in “Ready for Doom (acoustic version)”

Adding finishing touches, e.g. volume normalization, fade-ins, bringing vocals forward, and removing some AI artifacts

As platform algorithms start to penalize fully AI-generated tracks, I am currently trying to assess whether algorithmic bias will give my tracks the same opportunities as traditionally produced ones.

2. You mention going through hundreds of AI generations before finalizing a track. What are you listening for in those moments that tells you, “this is the right DNA”?

The key questions are: Do I like it? Do I want to listen to it again? Does the music support the lyrics in an interesting way?

One of my approaches to writing is to write a chorus or a verse and chorus and make 30 or so generations, e.g. 8 genres x 4 tries. I keep the ones that have potential and build from there: maybe cropping and extending, maybe regenerating with more detail in the prompt, maybe reworking the lyrics so the cadence and impact land better. It is an iterative process. Sometimes, probably too often, I can’t decide which version is best, so I release multiple versions and see which one resonates more with other people.

3. The album carries a strong emotional narrative about creative struggle and belonging. How personal is this story, and were there specific moments in your life that directly inspired certain tracks?

It is a mix of personal feelings and experiences, along with feelings I have repeatedly seen artists and musicians express on social media. “What is art?” is just a straight-up rant about anti-AI bias. If I feel like I have to repeat my rant many times, and I get sick of hearing myself say (or think) the same thing over and over, I make a song about it. When I wrote “Poison Darts”, I was mired in social media negativity and decided it was healthier to just write a song about what I was feeling. “Vanity” was a reworking of a song from my “Seven Deadly Sins” album, which was purely an experiment in creating songs narrated by people who embodied the sins and exposed their impact.

“Treadmill of Hope” was more of an amalgamation of feelings I heard other artists express, along with some elements that resonated with me personally.

The title track “Maybe I belong” started purely as an experiment to see if I could transform a classical piece into something interesting and different. I loved the sound, so I decided to add lyrics. It felt powerful, so I thought overcoming imposter syndrome would be great subject matter (and fitting for a Rachmaninoff piece, since it was something he experienced). It came right when I was seeing some growth on Spotify, so I would say it is a dramatization of my personal experiences.

4. Tracks like “Treadmill of Hope” and the title piece draw from very distinct influences. How do you balance classical inspiration and modern rock energy without losing cohesion across the album?

Last year, I was releasing albums with wildly different sounds on them. This was deliberate because it was fun for me, but I realized it probably could not be appreciated by a listener who didn’t already understand me and my mindset, and there were about zero of those in the world.

This album was my first attempt to create an album that told a story and was sonically cohesive. All of the songs are reworked, remixed or remastered versions of previously released tracks.

I realized they could tell a story of hope, struggle, growth and confidence, and that the lyrics and the musical progression together could support a coherent, hopeful, resonant story, even for traditional musicians who may never listen because it uses AI.

5. There’s an ongoing debate around AI in music, especially regarding authenticity. What would you say to critics who question whether AI-assisted work can truly be considered “human” art?

First, I would point them to the song “What is art?”

People have always used increasingly sophisticated tools to create art. My belief is that if a piece makes the listener think or feel, it is art. Everyone has the right to judge whether they like a piece of art, but trying to constrain art because of process is the antithesis of art. I have been told that some of my songs made people cry. That is a high compliment. I have always wanted to create songs that start conversations. I prefer those conversations to be about my messages, but if they are about my process, that is OK too.

I think that, with the exception of legendarily talented and hugely well-funded musicians, most music is a triumph over the constraints a musician faces: time, money and skills. Dealing with an unreliable drummer, the cost of studio time, and access to a virtuoso violinist for a 20-second part are just a few of the million challenges that sit between an idea and a finished track. My hope is that musicians won’t use AI to replace what they can already do, but will use it to remove the constraints blocking them from achieving bold visions.

I think “Maybe I belong” and my EP “Superhuman” are extreme examples of creating pieces that most musicians just can’t. The irony is that when I hear them, my first thought is “I would love to see that performed live”.

6. Since you focus on studio creation rather than live performance, how do you envision the future of presenting your music? Could we see virtual shows or new digital formats becoming part of your artistic expression?

I honestly don’t know. My dream is to hear humans play my music. I would be perfectly happy just being a songwriter. I am happy even if I am the only one listening to my music. I think there is a difference between song creation and performance, and performance is not my focus.

I am not a born performer, so I am not as driven as I would need to be to create the kind of performance required to really engage people. It would be amazing to one day work with someone who is. I don’t think I will be that kind of breakthrough artist. As far as I can tell, Twenty One Pilots could incorporate AI music, no one would know, and it would be accepted, since they already perform with backing tracks and create incredible music and performances. I recently saw a David Byrne show where he made the performance primary and the music played a supporting role.